Srsly Risky Biz: Is Claude Too Woke For War?

Your weekly dose of Seriously Risky Business news is written by Tom Uren and edited by Amberleigh Jack. This week's edition is sponsored by Socket.

You can hear a podcast discussion of this newsletter by searching for "Risky Business News" in your podcatcher or subscribing via this RSS feed.

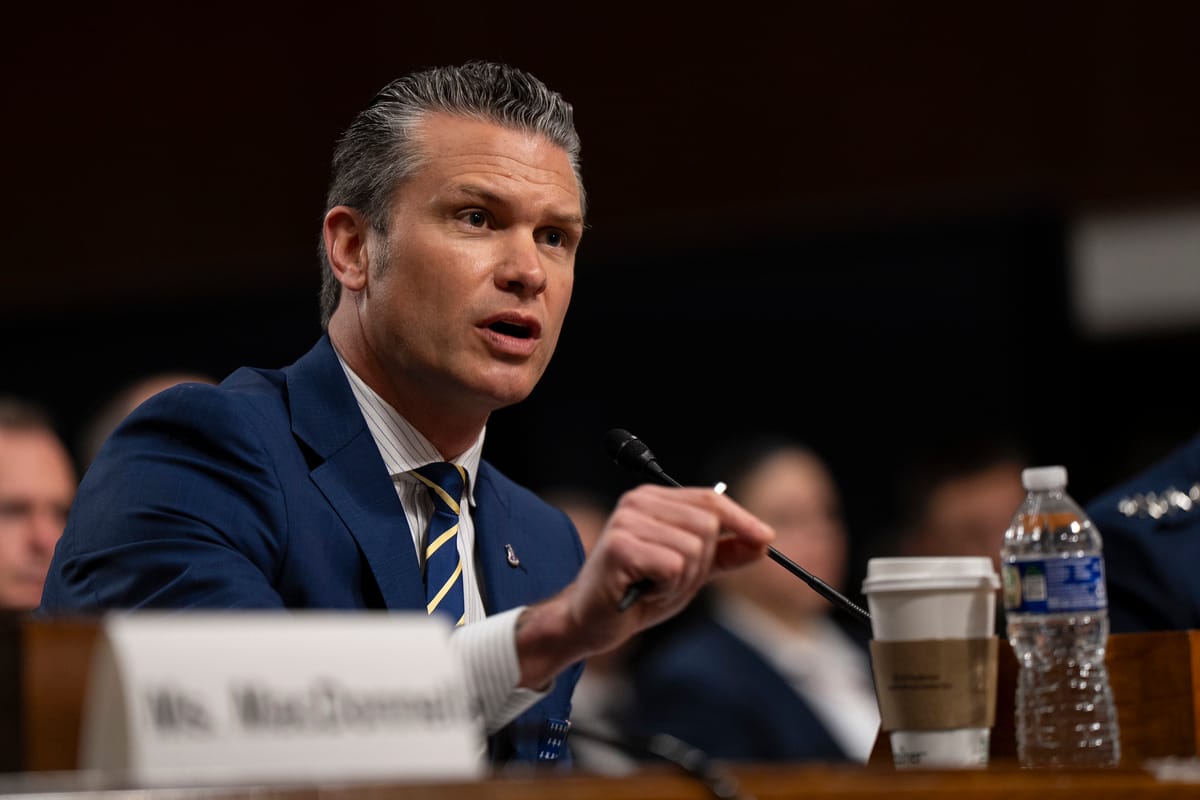

This week, US Defense Secretary Pete Hegseth delivered an ultimatum to Anthropic that it allow unrestricted military use of its AI models by Friday or face harsh punishments. This raises the question: When it comes to military use of AI, who exactly should be setting the rules?

At issue for the Department of Defense are safeguards intended to prevent accidental or malicious use of AI. The Pentagon argues that AI is no different from any other technology and decisions about how it is used should be left to the military.

In mid-January, Hegseth spoke about accelerating AI deployment within the War Department and eliminating barriers that prevent deploying the technology to the battlefield. Hegseth railed against "equitable AI, and other DEI and social justice infusions that constrain and confuse our employment of this technology… We will not employ AI models that won't allow you to fight wars."

So far, Claude is the only model to have been approved for DoD classified work, although the Pentagon this week negotiated a deal for xAI's Grok. Regarding Claude, one defence official told Axios "we need them and we need them now", because, "they are that good". If Anthropic doesn't cave, Hegseth has reportedly threatened to either force the company to remove its limits by invoking the Defense Production Act, or declare Anthropic a supply chain risk and freeze it out of the DoD's supply chain. It's like an abusive relationship: we must have you or no one can.

We have some sympathy with Hegseth's position here, if not his methods. Claude's constitution, known within Anthropic as its soul document, was published the week following Hegseth's speech. Parts of it, such as prohibitions on harming others, are clearly not practical for the military.

However, Anthropic has stated that Claude's constitution doesn't apply to special purpose models. When it comes to military uses, CEO Dario Amodei has specified only two hard red lines. He said models should not be used for the mass surveillance of Americans or in autonomous weapons that fire without a human in the loop. Anything else is fine.

That's hardly transforming Claude into a social justice warrior singing Kumbaya around the campfire!

Regardless, there is a strong case that rules about military use of AI should be in the hands of Congress, rather than being decided in an argy-bargy between the Secretary of Defense and a private sector CEO. The Pentagon's Chief Technology Officer Emil Michael acknowledged this when he framed Anthropic's position as "undemocratic".

Congress writes bills, the President signs them and agencies comply, he said. "What we're not going to do is let any one company dictate a new set of policies above and beyond what Congress has passed".

This Lawfare article expands on arguments for Congressional involvement. One of the more compelling ones is simply that Congress's role is to make decisions when difficult tradeoffs are involved.

We agree, but would add that much of the disagreement between the Pentagon and Anthropic has arisen because they have different views of what Claude is. The Pentagon views it as a tool that should be available to deploy whenever and wherever it likes. Anthropic views it as an entity, like an idiot savant that can do amazing things but needs special training or guidance to help it work better. That's what Claude's constitution is for. It is incorporated into the training of mainline models to help them make better decisions.

The distinction between entity and tool becomes more important as models become more capable and are trusted with more important jobs. Military personnel are indoctrinated with military values, so a machine intended to replace human decision-making needs to understand those values too. That means appropriate training for military models would involve not just removing Claude's constitution, but replacing it with something else.

We have no idea what Claude's "warrior ethos" will look like as it takes shape over time, but it will surely be more nuanced than any black-and-white legislation passed by Congress. This is a fast-moving space in which training methodologies and DoD uses will change quickly.

We think some hard-and-fast rules will be worth legislating, but Congress should also take a mentoring role. It needs to find transparency and oversight mechanisms that ensure both the DoD and companies are developing and using models in ways that reflect American values and support US interests.

US Ostrich Strategy No Match for Volt Typhoon

Last year, in a rare moment of apparent good news, the US government announced that it had successfully dealt with Volt Typhoon. As it turns out, declaring victory is not the same thing as actual victory, and unfortunately the head-in-sand strategy encourages the private sector to just ignore the threat.

Volt Typhoon is the Chinese hacker group prepositioning itself in American critical infrastructure, likely for sabotage in the event of a military confrontation involving Taiwan. That's a serious concern, but for a brief moment the US appeared to have secured a cyber win. Speaking at the International Conference on Cyber Security in July last year, Kristina Walter, director of the NSA’s Cybersecurity Collaboration Center, said Volt Typhoon's effort had "really failed". Speaking at the same event, the FBI's assistant director for cyber, Brett Leatherman, said "we equipped the entire private sector and US government to hunt for them and detect them".

This week, however, operational technology cyber security firm Dragos released its 2026 Year in Review report, which warned the group is still active and continues to attack US utility firms. Dragos CEO Rob Lee told The Record, "they're still absolutely mapping out and getting into [and] embedding in US infrastructure, as well as across our allies".

The report also described the rise of a new group it calls Sylvanite, which carries out "large-scale initial access operations" and then hands that access off to other groups, including Volt Typhoon. Dragos has seen the group targeting the electricity, water, and oil and gas sectors in multiple regions including North America, Europe, the United Kingdom and Guam.

Colour us totally unsurprised.

State-backed hackers don't compromise US critical infrastructure on a whim. It's part of a broader plan to achieve state goals. Unless that overall strategy changes, a hacking campaign doesn't disappear, it evolves.

Granted, the US has scored some significant wins against Volt Typhoon, such as disrupting the KV botnet the group was using in 2024. But China's broader strategic calculus remains. Military action around Taiwan is still on the cards and, for Beijing, dedicating time and effort to be able to meddle with US critical infrastructure remains a good investment.

One problem for the US government is that a lot of critical infrastructure is privately owned. Without the regulatory tools to order operators to take action against Volt Typhoon, the government can only encourage them to act.

So prematurely declaring victory is pretty counterproductive. Why would a private sector operator spend time and resources looking for and countering Volt Typhoon when the government says its campaign is a bust?

The US has conducted operations that have significantly impacted Volt Typhoon and we expect that there will be more. That's good, but on its own it's not enough. There needs to be private sector cooperation. The ostrich strategy of pretending the threat simply does not exist isn't the way to get it.


Three Reasons to Be Cheerful This Week:

- Embedded security scanning: Anthropic announced that it is rolling out Claude Code Security, a new Claude Code feature that will scan codebases for vulnerabilities and suggest solutions for human review. The company says that it has been using the feature on its own code and has found it to be "extremely effective" at securing its own systems. It is now available in a limited research preview.

- ASD releases malware analysis tool: The Australian Signals Directorate has open sourced its large-scale malware analysis tool, Azul. It says the tool can "explore, analyse and correlate malware at scale" and is designed to store tens of millions of samples.

- Rogue OpenClaw deletes AI alignment researcher's email: Summer Yue, a director in a Meta AI lab, posted about her experience with OpenClaw mass-deleting emails from her inbox. Her archiving strategy had worked well on a small toy inbox, but choked when presented with her far larger real inbox. Schadenfreude is kinda like cheer, isn't it? Good on Yue for having the guts to post about this.

Sponsor Section

In this Risky Business sponsor interview, Casey Ellis and Socket's Feross Aboukhadijeh discuss how AI is affecting open source, chat about a few attacks Socket has seen in the wild and introduce the company's answer to the smouldering trashfire: Socket Firewall.

Shorts

Anthropic Victim of Distillation Attacks

Anthropic reported this week that it had identified "industrial-scale" distillation attacks. This follows on from our story last week about OpenAI and Google reporting on the problem.

The company called out three Chinese AI labs: DeepSeek, which was also identified by OpenAI, as well as Moonshot and MiniMax. It found that DeepSeek was using Claude to try to develop censorship capabilities:

"We also observed tasks in which Claude was used to generate censorship-safe alternatives to politically sensitive queries like questions about dissidents, party leaders, or authoritarianism, likely in order to train DeepSeek’s own models to steer conversations away from censored topics."

Again, as per last week's reports, this is a direct call for government assistance to maintain America's lead in AI.

Risky Biz Talks

You can find the audio edition of this newsletter and other fine podcasts and interviews in the Risky Biz News feed (RSS, iTunes or Spotify).

In our latest "Between Two Nerds" discussion, Tom Uren and The Grugq talk about how ‘professional’ Five Eyes cyber espionage agencies like NSA will use AI. These agencies place a premium on stealth and won’t yolo AI.

Or watch it on YouTube!

From Risky Bulletin:

Russia starts criminal probe of Telegram founder Pavel Durov: Russian authorities have launched a criminal investigation of Telegram founder and CEO Pavel Durov. He is alleged to have promoted and facilitated terrorist activity on the Telegram platform by failing to respond to law enforcement takedown requests.

The criminal probe was revealed in a long piece published on Tuesday by the official newspaper of the Russian government, the Rossiyskaya Gazeta.

Russian officials have accused Durov of choosing a "path of violence and permissiveness" by not cooperating with the country's law enforcement agencies.

[more on Risky Bulletin]

AI-driven hacking campaign breaches 600+ Fortinet devices: A Russian-speaking, financially motivated threat actor has used commercial AI toolkits to hack more than 600 Fortinet firewalls.

The campaign began at the start of the year, around January 11, according to the AWS security team.

The attacker didn't exploit zero-days or older vulnerabilities. Instead, they targeted FortiGate devices that had their management ports exposed online, used weak passwords, and didn't have MFA enabled.

[more on Risky Bulletin]

RPKI infrastructure sits on shaky ground: The infrastructure that supports the Resource Public Key Infrastructure (RPKI) security standard is not as secure as one might believe and is prone to multiple attacks that could hinder or crash global internet routing.

A new research paper that will be presented next week at the Network and Distributed System Security (NDSS) Symposium looks at a type of server in the RPKI infrastructure known as a Publication Point (PP), and at how attacking these servers can prevent routers from validating routing information.

[more on Risky Bulletin]